May 19th, 2022

Authors and affiliations

Rebecca Cors, Wisconsin Center for Education Research

Christine Bell, Wisconsin Center for Education Research

Introduction

Many education program managers use traditional pretest-posttest (TPT) surveys to evaluate how people’s attitudes, awareness, and behaviors change after they experience an interactive exhibit. However, surveying visitors both before and after they interact with an exhibit can be expensive and could drive people away. In many cases, using a single, post-intervention survey to ask for both pre- and post-experience ratings, called a retrospective pre-posttest (RPT), addresses these concerns. This blog post describes a study that compared TPT and RPT approaches for evaluating a public space exhibit.

The take-away: If your informal education program lasts for a few days or less, is focused on individual perceptions of change, and probably will not make participants feel pressured to give socially desirable responses, RPT could be the best method for evaluation. It saves time and money, and is likely to give a more authentic picture of how people perceive changes.

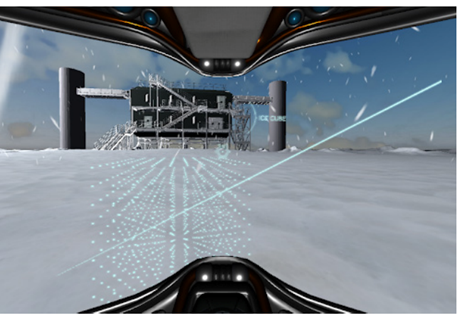

Background: An Exhibit about Research in Antarctica (IceCube)

In 2018, a research team was designing a study to evaluate the Polar Virtual Reality Exhibit (PVRE Exhibit) using National Science Foundation funding from the Advancing Informal Science Learning program and the Office of Polar Programs (NSF #1612504). This mobile exhibit stood in the first-floor public space of the Discovery Building at the University of Wisconsin-Madison. It offered both virtual reality and interactive tabletop touchscreen games about an Antarctic research project called IceCube. The games lasted from two to four minutes. The exhibit study team wanted to learn about exhibit user experiences in a way that could be generalized to other locations the exhibit might inhabit.

Challenges of Data Collection

The team wondered: how many survey responses should they collect in order to produce generalizable results? Based on previous experience with out-of-classroom education programs, team scientist and evaluator Rebecca Cors offered tips for determining an appropriate sample size. Based on these tips, the team determined that they should collect data from at least 120 exhibit users. Using a traditional pretest-posttest (TPT) survey approach, they would have to administer a total of 240 surveys: 120 before and 120 after the exhibit intervention. How likely were visitors to respond to both surveys? And could the survey deter visitors from trying out the exhibit?

Hoping to find a way to reduce surveying for the PVRE Exhibit study, Cors attended a presentation by colleague Christine Bell about using just one post-intervention survey to ask participants about both pre- and post-intervention opinions. Bell’s workshop, which drew from a 2015 webinar from EvaluATE, explained how, in certain situations, data collected using RPT (retrospective pre-posttest) were as reliable and valid as data from TPT. Moreover, Bell explained, RPT offers advantages: mainly reduced cost, reduced burden for survey respondents, and reduced workload for data collection and analysis.

From the Experts: Pros and Cons of RPT and TPT

To understand whether the RPT method would be appropriate for studying PVRE Exhibit outcomes, Cors reviewed the research literature about RPT that Bell shared during her presentation. One major concern experts share about using an RPT design is recall bias, or participants’ inability to accurately remember what they thought before an intervention. Experts agree that there is some degree of recall bias (i.e., distortion or degradation of memory) in all retrospective ratings. This bias increases as the length of recall time increases and seems to be greater for attitudinal than for behavioral and skill ratings. Given that the PVRE game lasted only a few minutes, the team expected recall bias to be minimal.

Another concern about RPT is motivational bias, or participants’ desire to present their past self as better than they actually were or to justify their effort during the intervention. The literature suggests that RPT is more effective for studies that examine individual perceptions of change, which is just what the PVRE study aimed to do.

TPT (traditional pretest-posttest) designs have a drawback of their own: response shift bias, in which participants characterize their awareness or behaviors more positively in the pretest than after an intervention. For example, participants in a mentoring skills improvement program might judge their behaviors more positively before the intervention than after completing the program, because during the program they develop new standards about what constitutes good mentoring practices. Therefore, using a TPT study design could underestimate visitors’ takeaways.

Moreover, a workload burden comes with employing a TPT design, because it takes time to match pre- and post-survey responses for each participant, and the matching process can introduce errors that reduce sample size. For example, a participant might leave a workshop early before completing a posttest, making their pretest rating unusable. By design, RPT participants’ pre- and posttest responses are collected together, allowing the data to be anonymous. Anonymous data collection can improve response rates and encourage candid feedback.
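The matching burden described above can be illustrated with a minimal Python sketch. Everything in it is hypothetical: the participant IDs, the ratings, and the helper name `match_tpt` are invented for illustration and are not the study's data or tooling.

```python
def match_tpt(pre_by_id, post_by_id):
    """Pair TPT pretest and posttest ratings by participant ID.

    Returns the matched pairs plus a sorted list of IDs that appear in only
    one of the two surveys and therefore cannot be analyzed as pairs.
    """
    matched = {
        pid: (pre_by_id[pid], post_by_id[pid])
        for pid in pre_by_id
        if pid in post_by_id
    }
    unmatched = sorted(set(pre_by_id) ^ set(post_by_id))
    return matched, unmatched


# Hypothetical TPT data: two surveys that must be linked by an ID.
pre_by_id = {"p01": 2, "p02": 1, "p03": 3, "p04": 2}
# p02 and p04 left before the posttest; p05 entered an ID with no pretest.
post_by_id = {"p01": 4, "p03": 4, "p05": 3}

matched, unmatched = match_tpt(pre_by_id, post_by_id)
print(f"TPT usable pairs: {len(matched)}, unusable records: {len(unmatched)}")

# Hypothetical RPT data: each respondent gives (before, after) in one
# anonymous survey, so no ID and no matching step are needed.
rpt_records = [(2, 4), (1, 3), (3, 4), (2, 3)]
print(f"RPT usable pairs: {len(rpt_records)}")
```

In this invented example, only two of the TPT records survive matching, while every RPT response arrives as a complete pair.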

To the team’s knowledge, no studies to date had compared RPT with TPT for studying informal learning programs. So, the study aimed both to examine user experiences with the PVRE using an RPT approach and to compare RPT and TPT.

Our Experiment Showed RPT is Ideal for Studying Many Informal Science Education Programs

Aims. The study compared RPT with TPT methodologies for measuring the change in visitors’ self-perceived basic understanding of a space exploration project in Antarctica, called IceCube, as a result of interacting with the tabletop touchscreen PVRE game.

Design. The survey item asked participants to rate their basic understanding of IceCube on a six-point scale, with endpoints labeled “none” and “expert.” TPT participants completed a pre-intervention survey about their basic understanding of IceCube using an iPad handed to them by a study team member. After playing the tabletop game about IceCube, they rated their basic understanding again, by answering an item in a survey embedded in the tabletop exhibit. RPT participants did not complete a pre-intervention survey. After they played the tabletop game, they completed both before and after items in a survey embedded in the tabletop exhibit.

Sample. There were 20 TPT participants and 79 RPT participants. About 60% of both groups were female, and 50%–80% were college students, aged 18–30 years.

Results. TPT and RPT sampling produced comparable results. According to a paired-samples t-test, TPT respondents’ average rating of their basic understanding of IceCube increased significantly after they played the tabletop PVRE game (TPT: Mpre = 2.0, Mpost = 3.5, p < 0.001). Similarly, RPT responses showed nearly the same average ratings for pre- and post-game understanding and a significant increase after playing the game (RPT: Mpre = 1.9, Mpost = 3.5, p < 0.001). Also comparable were the effect sizes for these gains in basic understanding of IceCube (TPT η² = 0.52; RPT η² = 0.53), which suggest a medium to strong association between the exhibit intervention and exhibit users’ basic understanding of IceCube.
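For readers who want to run this kind of analysis on their own survey data, here is a minimal Python sketch of a paired-samples t statistic and an eta-squared effect size. It rests on assumptions: the ratings are invented (they are not the study's data), the function name is hypothetical, and the effect size uses the common formula η² = t² / (t² + df). The post does not say how the study computed its effect sizes.

```python
import math
import statistics


def paired_t_and_eta_squared(pre, post):
    """Return (t, df, eta_squared) for paired pre/post ratings.

    Assumes eta squared = t^2 / (t^2 + df), a common choice for paired
    designs; the original study may have used a different formula.
    """
    if len(pre) != len(post):
        raise ValueError("pre and post must have the same length")
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    if n < 2:
        raise ValueError("need at least two paired ratings")
    sd_diff = statistics.stdev(diffs)  # sample standard deviation
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")
    t = statistics.mean(diffs) / (sd_diff / math.sqrt(n))
    df = n - 1
    eta_squared = t ** 2 / (t ** 2 + df)
    return t, df, eta_squared


# Invented ratings on a six-point scale, for illustration only.
pre = [2, 1, 2, 3, 1, 2, 2, 1]
post = [4, 3, 3, 4, 3, 4, 3, 3]

t, df, eta_sq = paired_t_and_eta_squared(pre, post)
print(f"t({df}) = {t:.2f}, eta squared = {eta_sq:.2f}")
```

The sketch stops at the t statistic because the Python standard library has no t-distribution function. If SciPy is available, `scipy.stats.ttest_rel(post, pre)` returns both the statistic and a p-value.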

It was interesting to see that the pretest rating from TPT subjects (2.0) was slightly greater than the RPT pretest rating (1.9), which could be explained by response shift bias. This is consistent with what other researchers have found about how TPT can underestimate participant gains. These results suggest that RPT may be a better measure of exhibit users’ gains in basic understanding of IceCube.

The main limitation of the comparison is the unequal sizes of the TPT and RPT sample groups. However, because subjects in each sample group were of similar age and because variance statistics (standard deviations) for each group were similar and small, the difference in group size was a minimal concern.

For the main study, using the RPT method enabled the study team to exceed its data collection goal of 120 visitor records in order to determine exhibit effectiveness. They collected responses from a total of 154 visitors, including some who used the tabletop game and some who played the virtual reality game.

Conclusion. The team concluded that RPT is ideal for evaluating many informal learning programs. These findings are evidence that data collected using an RPT approach are comparable with data from TPT for many informal science programs. In some cases, RPT may even be more valid (more accurately representing people’s gains from an intervention) than TPT.

Additional RPT Uses and Benefits

Since working on the PVRE study, Cors refers many program managers to the RPT approach. She has seen RPT used for programs that last up to a few weeks or even a few months. She sees RPT as a tool for asking participants for feedback about change, whereas TPT asks for two stand-alone ratings.

Bell finds the RPT approach supports more efficient use of resources, because data is less complicated to organize and analyze. For example, there is far less work needed to connect pre- and posttest ratings for each participant. Bell recently used RPT for the NIH-funded Scientific Communication Advances Research Excellence (SCOARE) program at MD Anderson. One goal of the evaluation was to relay participant feedback about knowledge and skills gains to workshop facilitators quickly, so they could help participants in the areas that most challenge them. By using the RPT method, Bell could eliminate time-consuming data cleaning and matching and give facilitators useful formative feedback within days, so they had time to plan adjustments before the next workshop.

References and Resources

Dahlstrom, E.K., Bell, C., Chang, S., Lee, H.Y., Anderson, C.B., Pham, A., et al. (2022) Translating mentoring interventions research into practice: Evaluation of an evidence-based workshop for research mentors on developing trainees’ scientific communication skills. PLoS ONE 17(2): e0262418. https://doi.org/10.1371/journal.pone.0262418

EvaluATE Evaluation Resource Hub. 2 for 1 Retrospective Pre-Post Self-Assessment. National Science Foundation. https://atecentral.net/events/31144/2-for-1-retrospective-pre-post-self-assessment. Accessed May 6, 2022.

Hill, L.G., and Betz, D.L. (2005) Revisiting the retrospective pre-test. American Journal of Evaluation 26(4): 501–517.

Howard, G.S., and Dailey, P.R. (1979) Response-shift bias: A source of contamination of self-report measures. Journal of Applied Psychology 64(2): 144–150.

Robinson, S. (2017) Starting at the End: Measuring Learning Using Retrospective Pre-Post Evaluations by Debi Lang and Judy Savageau. AEA365 Blog. July 31, 2017. https://aea365.org/blog/starting-at-the-end-measuring-learning-using-retrospective-pre-post-evaluations-by-debi-lang-and-judy-savageau/

Tredinnick, R., Cors, R., Madsen, J., Gagnon, D., Bravo-Gallart, S., Sprecher, B., and Ponto, K. (2020) Exploring the universe from Antarctica – an informal STEM polar research exhibit. Journal of STEM Outreach 3. https://bit.ly/JSOTredinnick